OpenRouter + MCP Server: 500+ AI Models for WHMCS

Use MCP Server to connect OpenRouter's 500+ AI models to your WHMCS. Route simple queries to free models, complex analysis to premium ones. Save 40-85%.

MX Modules Team


OpenRouter gives you access to 500+ AI models from dozens of providers through a single API endpoint. 4.2 million users and 250,000+ applications already use it. In June 2025, it raised $40 million at a $500 million valuation.

For hosting providers running WHMCS, the combination of OpenRouter + MCP Server means you can query your billing data using any model. Route simple client lookups to free models. Send complex revenue analysis to premium ones. One MCP connection, any model you want.

This guide covers the architecture, model recommendations for specific WHMCS tasks, cost comparisons, and setup instructions.

What Is OpenRouter?

OpenRouter is a unified API gateway for AI models. Instead of managing separate API keys for Anthropic, OpenAI, Google, Meta, and others, you use one OpenRouter API key and one endpoint. OpenRouter routes your request to the model you specify.

The numbers:

- 500+ models from 30+ providers

- Single API endpoint (OpenAI-compatible, just change the base URL)

- 5.5% fee on credit purchases (no markup on token prices)

- 24+ free models available (DeepSeek R1, Llama 3.3 70B, Llama 4 Maverick, Gemini 2.0 Flash)

- Additional latency: approximately 25ms per request

- Automatic fallback if a provider goes down

The OpenAI compatibility means any tool that works with OpenAI's API works with OpenRouter by changing two values: the base URL and the API key.
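A minimal sketch of that swap, using only Python's standard library. It builds, but does not send, a chat-completions request against OpenRouter's OpenAI-compatible endpoint. The model ID is an example in OpenRouter's `provider/model` format, and reading the key from an `OPENROUTER_API_KEY` environment variable is our own convention, not a requirement.

```python
import json
import os
import urllib.request

# OpenAI-compatible base URL: the only endpoint value that differs from OpenAI's API.
OPENROUTER_BASE_URL = "https://openrouter.ai/api/v1"


def build_chat_request(model: str, prompt: str, api_key: str) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat completion request aimed at OpenRouter."""
    body = {
        "model": model,  # OpenRouter model IDs use the "provider/model" format
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{OPENROUTER_BASE_URL}/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",  # the OpenRouter key replaces the OpenAI key
            "Content-Type": "application/json",
        },
        method="POST",
    )


if __name__ == "__main__":
    req = build_chat_request(
        model="anthropic/claude-3-haiku",  # example ID; check openrouter.ai/models
        prompt="Summarize this support ticket in two sentences.",
        api_key=os.environ.get("OPENROUTER_API_KEY", "sk-or-placeholder"),
    )
    print(req.full_url)
    # To actually send it: urllib.request.urlopen(req)
```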

Why Hosting Providers Should Care

Most WHMCS AI setups use a single model for everything. Claude for client lookups, Claude for revenue analysis, Claude for churn prediction. That works, but it is expensive and inflexible.

With OpenRouter, you match the model to the task:

| WHMCS Task | Recommended Model | Approx. Cost per 1M Input Tokens | Why This Model |
|---|---|---|---|
| Client lookups | DeepSeek R1 (free tier) | $0 | Simple data retrieval, no reasoning needed |
| Ticket summaries | Claude Haiku | $0.25 | Fast, good at summarization |
| Invoice analysis | Claude Sonnet | $3 | Needs reasoning for calculations |
| Financial reports | GPT-4o | $5 | Strong at structured output and tables |
| Churn prediction | Claude Opus | $15 | Complex multi-factor analysis |
| Batch processing | Llama 3.3 70B (free tier) | $0 | High volume, quality not critical |

A hosting provider using GPT-4 for everything pays approximately $30 per million tokens. Routing 40% of simple queries to free models and 30% to Claude Haiku reduces costs significantly without sacrificing quality where it matters.
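One way to encode the task-to-model table in a client is a plain lookup with a default. This is a sketch, not a tested configuration: the task labels are hypothetical, and the OpenRouter model IDs are examples that should be checked against openrouter.ai/models before use.

```python
# Example OpenRouter model IDs per WHMCS task (illustrative; confirm current IDs and prices).
TASK_MODELS: dict[str, str] = {
    "client_lookup": "deepseek/deepseek-r1:free",
    "ticket_summary": "anthropic/claude-3-haiku",
    "invoice_analysis": "anthropic/claude-3.5-sonnet",
    "financial_report": "openai/gpt-4o",
    "churn_prediction": "anthropic/claude-3-opus",
    "batch_processing": "meta-llama/llama-3.3-70b-instruct:free",
}

# Unknown task types fall back to a mid-tier model rather than the most expensive one.
DEFAULT_MODEL = "anthropic/claude-3.5-sonnet"


def model_for_task(task: str) -> str:
    """Return the OpenRouter model ID to use for a (hypothetical) WHMCS task label."""
    return TASK_MODELS.get(task, DEFAULT_MODEL)


if __name__ == "__main__":
    print(model_for_task("client_lookup"))
    print(model_for_task("something_new"))
```

Defaulting to a mid-tier model is a design choice: an unclassified query never silently lands on the most expensive model, and never on a free model that might be too weak for it.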

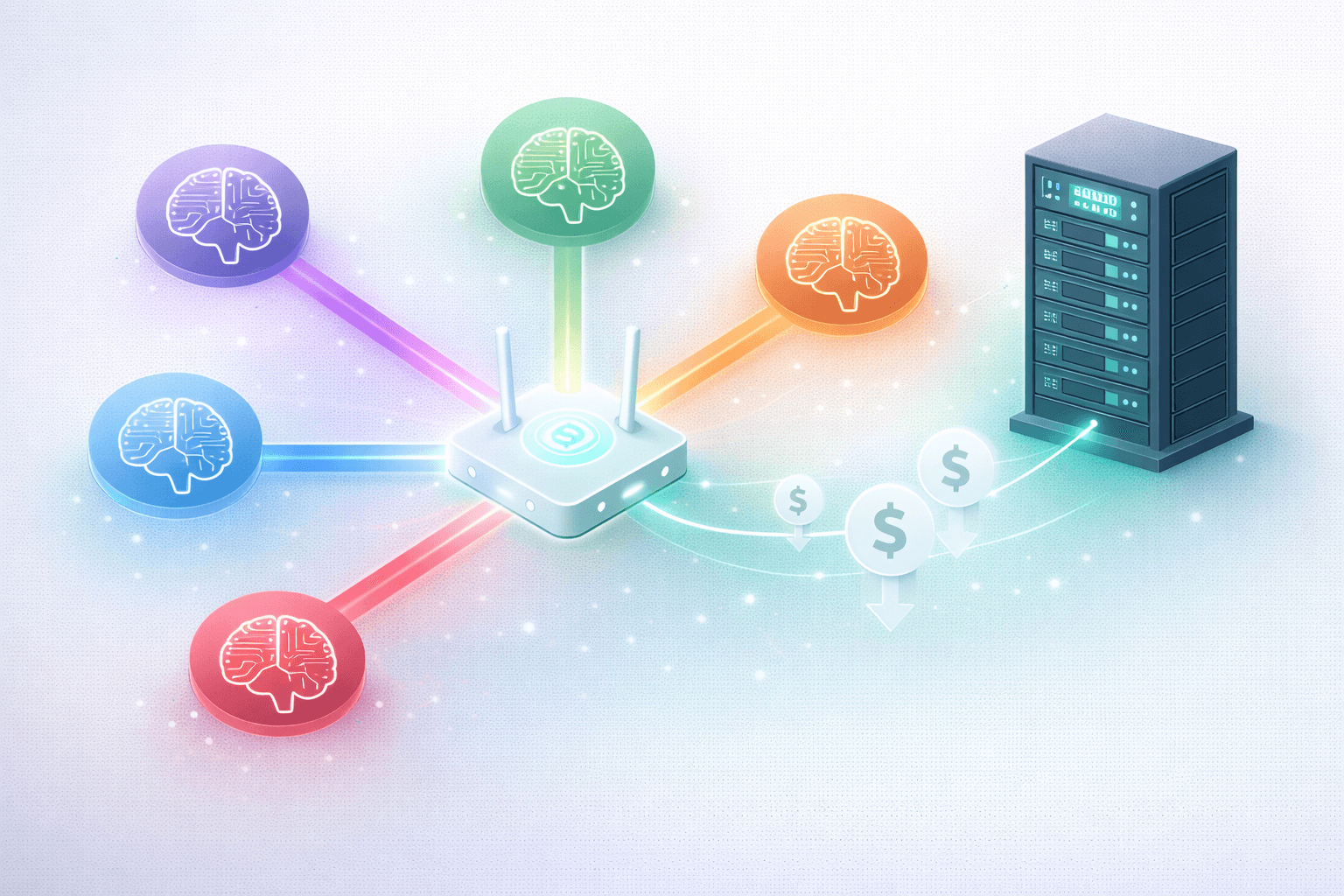

The Architecture: OpenRouter + MCP Server + WHMCS

OpenRouter provides models. MCP Server provides WHMCS tools. They serve different roles in the stack:

[AI Client] → [OpenRouter API] → [Selected Model]

[AI Client] → [MCP Protocol] → [MCP Server] → [WHMCS]

MCP Server supplies the 46 WHMCS tools, the security layer, and audit logging.

You do not connect OpenRouter directly to WHMCS. Instead, you connect a multi-model, MCP-compatible AI client (such as OpenClaw or Goose) to both:

- OpenRouter for model selection

- MCP Server for WHMCS data access

The AI client handles the orchestration. It uses the model from OpenRouter to reason about queries, and MCP Server tools to read and write WHMCS data.
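A simplified sketch of the hand-off the client performs: when the model's OpenAI-style response contains a tool call, the client turns it into an MCP `tools/call` JSON-RPC 2.0 request for MCP Server. The tool name `whmcs_get_clients` is hypothetical, and real clients also handle `initialize`, tool discovery via `tools/list`, and returning tool results to the model, all omitted here.

```python
import json
from itertools import count

# Monotonic JSON-RPC request IDs for this client session.
_request_ids = count(1)


def tool_calls_to_mcp(chat_response: dict) -> list[dict]:
    """Convert tool calls in an OpenAI-style chat response into MCP tools/call requests."""
    message = chat_response["choices"][0]["message"]
    requests = []
    for call in message.get("tool_calls") or []:
        fn = call["function"]
        requests.append({
            "jsonrpc": "2.0",
            "id": next(_request_ids),
            "method": "tools/call",
            "params": {
                "name": fn["name"],
                # OpenAI-style responses encode tool arguments as a JSON string.
                "arguments": json.loads(fn["arguments"] or "{}"),
            },
        })
    return requests


if __name__ == "__main__":
    # A hand-written response shaped like what a model returns through OpenRouter.
    sample = {
        "choices": [{
            "message": {
                "role": "assistant",
                "content": None,
                "tool_calls": [{
                    "id": "call_1",
                    "type": "function",
                    "function": {
                        "name": "whmcs_get_clients",  # hypothetical tool name
                        "arguments": "{\"status\": \"Active\"}",
                    },
                }],
            }
        }]
    }
    for req in tool_calls_to_mcp(sample):
        print(json.dumps(req))
```

Note that nothing in this hand-off depends on which model produced the tool call, which is why MCP Server's permissions apply the same way to every model.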

Cost Savings: Real Numbers

Published case studies and benchmarks report figures like these:

Single model vs intelligent routing:

- Companies that route queries by task report 40-85% lower costs than those using one model for everything.

- A SaaS support company routed 70% of requests to smaller models. Monthly bill dropped from $42,000 to $29,000 with no user complaints.

- Prompt optimization and response caching give 15-40% immediate savings on top of model routing.

- Combining prompt compression, model cascading, and caching yields 60-80% compound savings.
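Response caching is the simplest of these techniques to illustrate. A minimal in-memory sketch, assuming that an identical prompt sent to the same model within a short window can reuse the earlier answer; `call` stands in for whatever function actually sends the request and is hypothetical here.

```python
import hashlib
import json
import time
from typing import Callable


class ResponseCache:
    """In-memory cache keyed on the exact model and messages, with a time-to-live."""

    def __init__(self, ttl_seconds: float = 300.0) -> None:
        self.ttl_seconds = ttl_seconds
        self._store: dict[str, tuple[float, str]] = {}  # key -> (stored_at, reply)
        self.hits = 0

    @staticmethod
    def _key(model: str, messages: list[dict]) -> str:
        # sort_keys makes the key independent of dict key order.
        payload = json.dumps({"model": model, "messages": messages}, sort_keys=True)
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()

    def get_or_call(self, model: str, messages: list[dict],
                    call: Callable[[str, list[dict]], str]) -> str:
        key = self._key(model, messages)
        now = time.monotonic()
        cached = self._store.get(key)
        if cached is not None and now - cached[0] < self.ttl_seconds:
            self.hits += 1
            return cached[1]
        result = call(model, messages)  # only misses and expired entries reach the paid API
        self._store[key] = (now, result)
        return result
```

The expiry matters for WHMCS: invoice and ticket data changes during the day, so a short TTL trades some savings for fresher answers.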

WHMCS-specific example:

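A hosting provider with 500 clients runs approximately 3,000 AI queries per month across support, billing, and reporting. The estimates below assume about 6 million tokens per month in total (roughly 2,000 tokens per query).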

| Approach | Monthly Cost |
|---|---|
| All queries via GPT-4 ($30/1M tokens) | ~$180 |
| All queries via Claude Sonnet ($3/1M tokens) | ~$18 |
| Routed: 40% free models, 30% Haiku, 20% Sonnet, 10% Opus | ~$13 |

The routed approach costs about 93% less than GPT-4 and about 27% less than using Claude Sonnet for everything. Quality stays high because complex tasks still use premium models.
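The arithmetic behind this comparison can be written out in a few lines. This sketch uses the article's own per-1M-token prices and assumes the ~$180 GPT-4 figure implies about 6 million tokens per month (roughly 2,000 tokens per query); it ignores the input/output token split, which changes real bills.

```python
# Prices in USD per 1M tokens, as listed in this article's tables.
PRICE_PER_M: dict[str, float] = {
    "free": 0.0, "haiku": 0.25, "sonnet": 3.0, "opus": 15.0, "gpt4": 30.0,
}

QUERIES_PER_MONTH = 3_000
TOKENS_PER_QUERY = 2_000  # assumption implied by ~$180 at $30/1M tokens
MONTHLY_TOKENS_M = QUERIES_PER_MONTH * TOKENS_PER_QUERY / 1_000_000  # 6.0 million


def monthly_cost(mix: dict[str, float], tokens_m: float = MONTHLY_TOKENS_M) -> float:
    """Monthly cost in USD for a traffic mix given as {model_key: share_of_tokens}."""
    if abs(sum(mix.values()) - 1.0) > 1e-9:
        raise ValueError("traffic shares must sum to 1")
    return sum(share * PRICE_PER_M[key] * tokens_m for key, share in mix.items())


if __name__ == "__main__":
    routed = {"free": 0.4, "haiku": 0.3, "sonnet": 0.2, "opus": 0.1}
    print(f"GPT-4 only:  ${monthly_cost({'gpt4': 1.0}):.2f}")
    print(f"Sonnet only: ${monthly_cost({'sonnet': 1.0}):.2f}")
    print(f"Routed:      ${monthly_cost(routed):.2f}")
```

Running the numbers this way also shows where the routed budget goes: the 10% of traffic sent to Opus accounts for most of the routed cost.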

Free Models Worth Knowing About

OpenRouter provides access to several free models that handle basic WHMCS queries well:

| Model | Provider | Strengths | WHMCS Use |
|---|---|---|---|
| DeepSeek R1 | DeepSeek | Reasoning, data extraction | Client lookups, invoice queries |
| Llama 3.3 70B | Meta | General purpose, fast | Batch processing, summaries |
| Llama 4 Maverick | Meta | Latest Llama, multimodal | Data analysis with charts |
| Llama 4 Scout | Meta | Efficient, good for routing | Simple Q&A about WHMCS data |
| Gemini 2.0 Flash | Google | Fast, 1M token context | Large data set analysis |

Free models have rate limits and may have higher latency. For internal WHMCS queries where response time is not critical, they are effective.
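A minimal client-side sketch for living with those rate limits, assuming your HTTP wrapper raises an exception when the API answers HTTP 429: retry the free model with exponential backoff, then move on to the next model in the list (for example a paid one). `RateLimitError` and `send` are hypothetical names, not part of any OpenRouter SDK.

```python
import time
from typing import Callable


class RateLimitError(Exception):
    """Raised by the (hypothetical) send function when the API answers HTTP 429."""


def call_with_fallback(models: list[str], send: Callable[[str], str],
                       retries_per_model: int = 2, base_delay: float = 1.0,
                       sleep: Callable[[float], None] = time.sleep) -> tuple[str, str]:
    """Try each model in order, backing off on rate limits; return (model, reply)."""
    for model in models:
        for attempt in range(retries_per_model):
            try:
                return model, send(model)
            except RateLimitError:
                # Exponential backoff before retrying the same model: 1s, 2s, 4s, ...
                if attempt < retries_per_model - 1:
                    sleep(base_delay * 2 ** attempt)
    raise RuntimeError("all models are rate-limited")
```

For example, `call_with_fallback(["deepseek/deepseek-r1:free", "anthropic/claude-3-haiku"], send)` keeps simple lookups on the free tier most of the time and only pays for Haiku when the free model is throttled.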

How to Set Up OpenRouter with WHMCS MCP Server

Step 1: Get an OpenRouter API Key

Create an account at openrouter.ai and generate an API key. Add credits (minimum $5). The 5.5% fee is applied when you purchase credits, not per request.

Step 2: Install MCP Server on WHMCS

If you have not already, install MCP Server on your WHMCS and generate an API key with the permissions you need. See the installation guide for details.

Step 3: Configure Your AI Client

The specific configuration depends on your AI client. Here are examples:

Claude Desktop:

Claude Desktop only works with Claude models, so use Claude directly rather than OpenRouter and add MCP Server to its configuration. OpenRouter is useful with multi-model clients such as OpenClaw and Goose.

OpenClaw with OpenRouter:

OpenClaw supports multiple LLM backends. Configure the LLM backend to use OpenRouter and MCP to use your WHMCS:

```json
{
  "mcpServers": {
    "whmcs": {
      "command": "npx",
      "args": [
        "mcp-remote",
        "https://your-whmcs.com/modules/addons/mx_mcp/mcp.php",
        "--header",
        "Authorization:Bearer YOUR_BEARER_TOKEN"
      ]
    }
  }
}
```

Then set the LLM provider to OpenRouter in OpenClaw settings, with your OpenRouter API key and the model you want to use.
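If you manage several WHMCS installs, generating the `mcpServers` block keeps the bearer token out of hand-edited files. A small sketch that reproduces the structure of the JSON config above; the `WHMCS_URL` and `MCP_BEARER_TOKEN` environment variable names are our own examples, and where the client expects the file depends on the client.

```python
import json
import os


def build_mcp_config(whmcs_url: str, token: str) -> dict:
    """Build the mcpServers block that points mcp-remote at a WHMCS MCP Server endpoint."""
    return {
        "mcpServers": {
            "whmcs": {
                "command": "npx",
                "args": [
                    "mcp-remote",
                    f"{whmcs_url.rstrip('/')}/modules/addons/mx_mcp/mcp.php",
                    "--header",
                    f"Authorization:Bearer {token}",
                ],
            }
        }
    }


if __name__ == "__main__":
    # Example variable names only; no tool requires them.
    config = build_mcp_config(
        os.environ.get("WHMCS_URL", "https://your-whmcs.com"),
        os.environ.get("MCP_BEARER_TOKEN", "YOUR_BEARER_TOKEN"),
    )
    print(json.dumps(config, indent=2))
```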

Goose with OpenRouter:

Goose supports OpenRouter as an LLM backend. Set the provider to openrouter and add your API key. MCP configuration is separate and points to your WHMCS MCP Server.

Step 4: Test with a Simple Query

Start with a low-stakes query: "Get WHMCS system status" or "List active clients." Verify the model responds correctly and MCP Server logs the request.

When OpenRouter Makes Sense (and When It Does Not)

OpenRouter is a good fit when:

- You use multiple AI models for different tasks

- You want to test new models without changing infrastructure

- Cost optimization matters (routing to free or cheap models for simple tasks)

- You want automatic failover if a provider goes down

- You use OpenClaw, Goose, or other multi-model agents

OpenRouter is not needed when:

- You only use Claude (Claude Desktop, Claude Code). Use Anthropic directly.

- You have fewer than 100 AI queries per month. The cost difference is negligible.

- Latency is critical. The additional 25ms per request may matter for real-time applications.

- You need guaranteed data residency. OpenRouter routes requests through its own servers.

OpenRouter vs Direct API Access

| Factor | OpenRouter | Direct API (Anthropic, OpenAI) |
|---|---|---|
| Models available | 500+ | One provider's models |
| API keys to manage | 1 | One per provider |
| Failover | Automatic | Manual |
| Latency overhead | ~25ms | None |
| Cost markup | 5.5% on credits | None |
| Free models | 24+ available | Provider-specific trials |
| Invoice | Single consolidated | One per provider |

For hosting providers using two or more model providers, OpenRouter simplifies management. For single-provider setups, the direct API is simpler and slightly cheaper.

Model Routing Strategy for WHMCS

Here is a practical routing strategy for a hosting provider with 200+ clients:

Tier 1: Free models (40% of queries)

- Client lookups by name or domain

- Service status checks

- Simple invoice queries

- System health checks

- Use: DeepSeek R1 or Llama 3.3 70B

Tier 2: Budget models (30% of queries)

- Ticket summarization

- Overdue invoice lists with grouping

- Product performance summaries

- Use: Claude Haiku ($0.25/1M tokens)

Tier 3: Standard models (20% of queries)

- Revenue analysis and MRR calculations

- Client value comparisons

- Collection priority reports

- Use: Claude Sonnet ($3/1M tokens)

Tier 4: Premium models (10% of queries)

- Churn prediction and risk analysis

- Financial forecasting

- Multi-factor client scoring

- Use: Claude Opus ($15/1M tokens)

With this setup, you only pay premium prices for premium analysis.
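A deliberately simple way to assign incoming queries to these tiers is keyword matching. This sketch assumes the highest matching tier wins and that anything unmatched is a Tier 1 lookup; the keyword lists and OpenRouter model IDs are illustrative, not a tested configuration.

```python
# Keyword lists are illustrative; tune them against your own query logs.
TIER_KEYWORDS: dict[int, tuple[str, ...]] = {
    4: ("churn", "forecast", "scoring", "risk"),
    3: ("revenue", "mrr", "compare", "collection"),
    2: ("summarize", "summary", "overdue", "performance"),
}

# Example OpenRouter model IDs per tier; check openrouter.ai/models for current ones.
TIER_MODELS: dict[int, str] = {
    1: "deepseek/deepseek-r1:free",
    2: "anthropic/claude-3-haiku",
    3: "anthropic/claude-3.5-sonnet",
    4: "anthropic/claude-3-opus",
}


def pick_tier(query: str) -> int:
    """Return the highest tier whose keywords appear in the query; default to Tier 1."""
    text = query.lower()
    for tier in sorted(TIER_KEYWORDS, reverse=True):
        if any(word in text for word in TIER_KEYWORDS[tier]):
            return tier
    return 1


def pick_model(query: str) -> str:
    """Map a free-text WHMCS query to a model ID via its tier."""
    return TIER_MODELS[pick_tier(query)]
```

Substring matching is crude (it will misfire on some phrasings), so treat this as a starting point; a common refinement is to let a free model classify the query before routing it.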

Frequently Asked Questions

Does OpenRouter store my WHMCS data? OpenRouter routes requests to model providers. It does not store conversation history or WHMCS data long-term. Check OpenRouter's privacy policy for current retention details. For maximum privacy with your billing data, use local AI models instead.

Can I use OpenRouter's free models for production WHMCS work? Yes, with limitations. Free models have rate limits and may be deprioritized during high traffic. For internal operations (not customer-facing), free models work well for simple queries.

How does this work with MCP Server permissions? MCP Server does not know or care which model processes your query. The security layer (API key authentication and audit logging) applies whether the query comes from a free DeepSeek model or a premium Claude Opus model.

What happens if OpenRouter goes down? Your MCP Server connection to WHMCS is independent of OpenRouter. If OpenRouter goes down, you lose the AI model but your WHMCS data and MCP Server keep running. Switch to a direct API connection as a fallback.
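A minimal failover sketch, assuming you hold keys for both OpenRouter and a direct provider. `send` is a hypothetical function that posts one chat request to the given endpoint. Note that model names differ between OpenRouter (`openai/gpt-4o`) and the provider's own API (`gpt-4o`).

```python
import urllib.error
from typing import Callable

# Endpoints tried in order. The direct entry is an example (OpenAI's own API);
# each endpoint needs its own API key and its own model naming.
ENDPOINTS = [
    {"name": "openrouter", "base_url": "https://openrouter.ai/api/v1", "model": "openai/gpt-4o"},
    {"name": "openai-direct", "base_url": "https://api.openai.com/v1", "model": "gpt-4o"},
]


def first_available(send: Callable[[dict], str]) -> tuple[str, str]:
    """Send to each endpoint in turn; return (endpoint name, reply) from the first that works."""
    errors = []
    for endpoint in ENDPOINTS:
        try:
            return endpoint["name"], send(endpoint)
        except (urllib.error.URLError, TimeoutError) as exc:
            # Connection failures and HTTP errors (HTTPError subclasses URLError)
            # move on to the next endpoint.
            errors.append(f"{endpoint['name']}: {exc}")
    raise RuntimeError("all endpoints failed: " + "; ".join(errors))
```

MCP Server is untouched by this switch: only the model endpoint changes, so the WHMCS connection, permissions, and audit logging stay the same.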

Is the 5.5% fee worth it? If you use two or more model providers, the consolidated billing and automatic failover usually offset the fee. If you only use one provider, connect directly.

Summary

OpenRouter + MCP Server for WHMCS gives hosting providers model flexibility and cost optimization. Route simple queries to free models, complex analysis to premium ones, and maintain security controls through MCP Server no matter which model processes the data.

Next steps:

- Install MCP Server to connect any AI to your WHMCS

- See all 80+ compatible tools

- Compare AI agents for WHMCS

- Use local AI for maximum privacy

MCP Server

AI Integration for WHMCS

Connect AI to your WHMCS. Query clients, invoices, and tickets using natural language. Starts with a 15-day free trial.

Documentation


MX Modules Team

We run a hosting business on WHMCS. These modules are the tools we built to solve our own problems, and now we share them with other providers.